Why AI Systems Need Finite Behavioral Envelopes

AI Systems Without Boundaries Are Not Engineering

In the first article of this series, I argued that Artificial Intelligence (AI) is entering engineered systems along two different architectural paths. On the first path, AI remains a bounded instrument inside a defined system architecture. On the second path, AI becomes the driver of system behavior. That distinction matters because it changes verification, authority, and responsibility.

The next question follows naturally: what makes one path still engineering, while the other begins to drift away from it?

The answer is boundaries.

Engineering does not begin with impressive output. It begins when a function is defined strongly enough to be understood, evaluated, and validated. If a system interacts with the physical world, its behavior must remain finite enough to describe, bounded enough to test, and stable enough to verify. Without those conditions, the language of engineering becomes weaker than the claim being made.

Engineering Requires a Finite Behavioral Domain

A system is not engineered simply because it performs a useful task. Engineering requires a finite behavioral domain.

That means engineers must be able to answer a basic question: within what class of situations is this function intended to operate? If that question cannot be answered clearly, then verification has no stable object. The system may still function in some cases, but its legitimate operating scope remains undefined.

This is especially important for Artificial Intelligence. Once AI participates in perception, classification, diagnostics, control support, or other operational roles, its valid domain must be delimited. What inputs belong inside the function’s intended use? What conditions, transitions, or edge cases are assumed? Which situations lie outside the claim?

Without that structure, performance claims remain open-ended. And when the claim is open-ended, the system is no longer being treated as a bounded engineering object.

The Operational Envelope Is Not Optional

Every engineered function operates within an envelope, whether or not the documentation is strong enough to show it.

Traditionally, engineers define envelopes through speed, load, temperature, pressure, voltage, timing, and environmental conditions. AI-enabled systems do not escape this requirement. Instead, the requirement becomes harder.

Now the envelope may also include scene ambiguity, data quality, feature distributions, sensor confidence, interaction timing, uncertainty thresholds, and degraded-state assumptions. These may be more difficult to define than classical physical limits, but they are still limits.

That is the key point. Difficulty does not remove obligation.

If Artificial Intelligence is expected to influence system behavior, then the system’s operational envelope must still be described in a form that engineers can review, challenge, and validate. Otherwise, “real-world deployment” becomes only a vague expression for behavior that has not yet been properly bounded.
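
One way to make such an envelope reviewable is to write it down as data rather than prose. The following is a minimal sketch, not a method taken from this series: the parameter names (`speed_kph`, `ambient_temp_c`, `sensor_confidence`) and their limits are hypothetical, chosen only to show classical and AI-specific limits living in the same structure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    """Closed interval [low, high] for one envelope parameter."""
    low: float
    high: float

    def contains(self, value: float) -> bool:
        return self.low <= value <= self.high


# Hypothetical envelope: names and numbers are illustrative assumptions only.
ENVELOPE = {
    "speed_kph": Limit(0.0, 130.0),         # classical physical limit
    "ambient_temp_c": Limit(-30.0, 50.0),   # classical environmental limit
    "sensor_confidence": Limit(0.80, 1.0),  # AI-specific limit
}


def violations(conditions: dict[str, float]) -> list[str]:
    """Return the envelope parameters that are missing or out of range."""
    problems = []
    for name, limit in ENVELOPE.items():
        if name not in conditions:
            problems.append(f"{name}: not reported")
        elif not limit.contains(conditions[name]):
            problems.append(
                f"{name}: {conditions[name]} outside [{limit.low}, {limit.high}]"
            )
    return problems


inside = violations(
    {"speed_kph": 90.0, "ambient_temp_c": 20.0, "sensor_confidence": 0.93}
)
outside = violations({"speed_kph": 90.0, "sensor_confidence": 0.55})
print(inside)   # empty list: every parameter is inside the envelope
print(outside)  # two entries: temperature not reported, confidence too low
```

Treating a missing parameter as a violation is a deliberate choice in this sketch: a condition that is not reported cannot be shown to lie inside the envelope.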

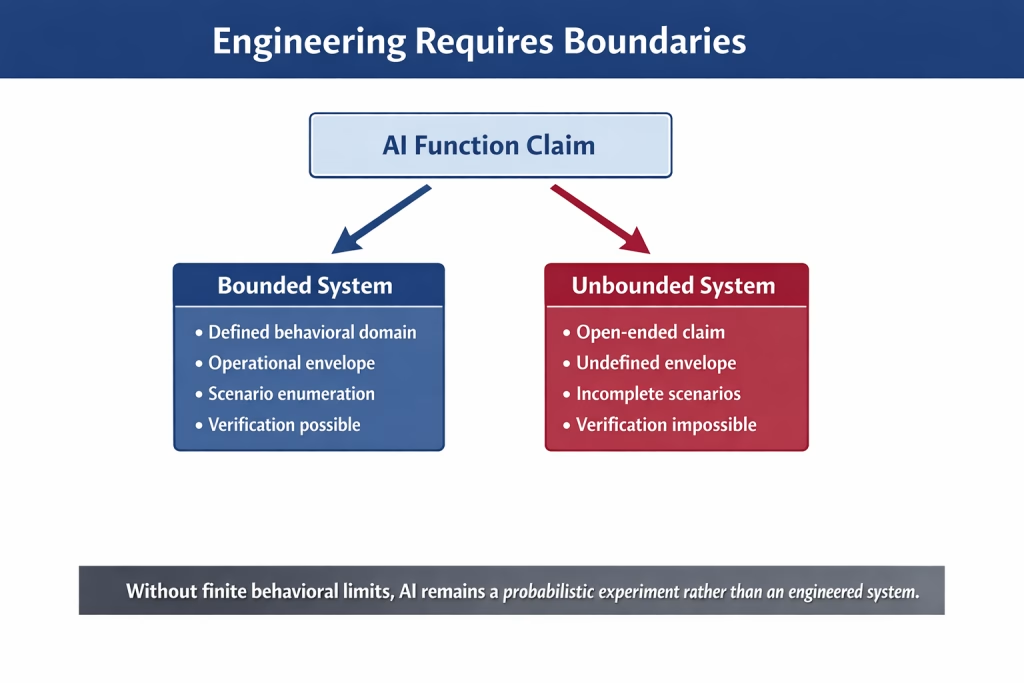

Figure 1. Engineering requires bounded behavioral domains: defined envelopes and enumerated scenarios make verification possible; unbounded claims do not.

Scenario Enumeration Creates the Basis for Verification

Once engineers define the behavioral domain and operational envelope, the next task is scenario enumeration.

Verification does not begin by exposing a system to many examples and hoping the coverage is good enough. It begins by asking what situations matter and what counts as correct behavior in each one.

Normal cases matter, but they are never enough. Boundary cases matter. Transition states matter. Degraded conditions matter. Rare but safety-relevant situations matter. If these are not deliberately enumerated, then completeness cannot even be discussed.

This is why scenario enumeration is not a bureaucratic exercise. It is the bridge between functional intent and verification logic. Without it, testing becomes broad but shallow. A system may appear well exercised while still missing the very states that most threaten safety, function continuity, or responsibility.
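
The difference between broad testing and enumerated testing can be sketched in a few lines. The example is illustrative only: the three dimensions and their values are hypothetical, and a real program would derive them from the defined behavioral domain. It lists every combination explicitly, then reports which enumerated scenarios no test has exercised.

```python
from itertools import product

# Hypothetical scenario dimensions; the values are illustrative assumptions.
DIMENSIONS = {
    "operating_point": ["nominal", "at_boundary"],
    "system_state": ["steady", "in_transition"],
    "sensor_health": ["healthy", "degraded"],
}


def enumerate_scenarios() -> list[tuple[str, ...]]:
    """Every combination of dimension values, in a fixed order."""
    return list(product(*DIMENSIONS.values()))


def uncovered(tested: set[tuple[str, ...]]) -> list[tuple[str, ...]]:
    """Enumerated scenarios that no test has exercised."""
    return [s for s in enumerate_scenarios() if s not in tested]


# A test campaign that looks broad but only exercises healthy sensors.
tested = {
    ("nominal", "steady", "healthy"),
    ("nominal", "in_transition", "healthy"),
    ("at_boundary", "steady", "healthy"),
    ("at_boundary", "in_transition", "healthy"),
}

scenarios = enumerate_scenarios()
gaps = uncovered(tested)
print(f"{len(tested)} of {len(scenarios)} scenarios tested")
for gap in gaps:
    print("untested:", gap)
```

A full cross product grows quickly as dimensions are added, so real programs usually prune or prioritize it. The point of the sketch is narrower: the missing degraded-sensor cases become visible only because the scenarios were enumerated first.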

For engineers, that is not a minor weakness. It is a structural gap.

Why Probabilistic Capability Is Not Enough

A common defense of unbounded AI claims points to the complexity of the real world and argues that engineers cannot fully enumerate it. That point contains some truth, but people often use it carelessly.

Engineering has never required omniscience. It requires boundedness.

The goal is not to describe all of reality. The goal is to constrain the function enough that engineers can describe its relevant behavior in finite terms within the intended domain. If a claimed AI function casts its scope so broadly that engineers cannot meaningfully bound its expected behavior, then the problem does not lie with engineering. The problem lies in how the function has been framed: outside the conditions that engineering requires.

At that point, performance rhetoric becomes dangerous. Strong statistical output does not replace defined boundaries. Useful inference does not replace structured validation. A system may appear capable while still lacking the conditions needed for engineering assurance.

At that stage, calling it advanced is no longer enough. A more honest description is this: it remains a probabilistic experiment operating in reality.

Verification Completeness Depends on Limits

Verification completeness does not mean engineers have tested every real-world variation. It means they have bounded the intended function clearly enough to identify and evaluate the relevant cases.

That is why limits matter.

When the system boundary is clear, engineers can define success conditions, degraded behavior, failure behavior, and escalation logic. They can also decide which parts of the architecture remain deterministic, which assumptions require periodic revalidation, and where authority must remain explicit. As Article 1 showed, these architectural choices determine whether AI remains a bounded instrument or becomes the effective driver of system behavior.
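
As a rough illustration of how these decisions can be made explicit, the sketch below wraps a model output in deterministic arbitration logic. Everything in it is an assumption made for illustration: the `Outcome` categories, the `arbitrate` function, and the 0.9 confidence threshold are hypothetical and are not taken from Article 1 or from any standard.

```python
from enum import Enum
from typing import Optional


class Outcome(Enum):
    ACCEPT = "accept model output"
    DEGRADED = "use deterministic fallback"
    ESCALATE = "hand authority to operator"


# Hypothetical threshold; a real value would come from validation evidence.
MIN_CONFIDENCE = 0.9


def arbitrate(inside_envelope: bool, confidence: Optional[float]) -> Outcome:
    """Deterministic rule deciding who holds authority for one decision cycle."""
    if confidence is None:
        # Incomplete model output: authority leaves the model.
        return Outcome.ESCALATE
    if not inside_envelope:
        # Outside the validated envelope, the confidence score is not trusted.
        return Outcome.ESCALATE
    if confidence < MIN_CONFIDENCE:
        # Inside the envelope but uncertain: fall back to deterministic logic.
        return Outcome.DEGRADED
    return Outcome.ACCEPT


print(arbitrate(True, 0.97).name)
print(arbitrate(True, 0.60).name)
print(arbitrate(False, 0.99).name)
print(arbitrate(True, None).name)
```

The ordering of the checks is the design choice worth reviewing: the envelope test comes before the confidence test, so a highly confident output outside the validated domain still escalates.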

When the system boundary is unclear, verification loses coherence. Testing may continue, data may accumulate, and performance may improve, but engineers can no longer make a convincing case for completeness because they have not fully defined the object of verification.

Without limits, verification becomes activity without closure.

The Practical Questions Engineers Must Ask

Before engineers accept an AI-enabled function as operationally credible, they should answer several basic questions: What bounded function is being claimed? What conditions define its valid behavioral envelope? Which scenarios have been enumerated? What counts as success, degradation, and failure? Which parts of the system remain deterministic by design? Who keeps authority when model output is uncertain, conflicting, or incomplete? And which assumptions require revalidation as data, software, and operating conditions evolve?
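
These questions can also be kept as a structured record, so that an unanswered one is visible rather than implied. The sketch below is a hypothetical illustration: the `FunctionClaim` fields simply mirror the questions above, and the sample entries are invented.

```python
from dataclasses import dataclass, field, fields


@dataclass
class FunctionClaim:
    """One field per question; an empty field means the question is unanswered."""
    bounded_function: str = ""
    envelope_conditions: list[str] = field(default_factory=list)
    enumerated_scenarios: list[str] = field(default_factory=list)
    success_degradation_failure: str = ""
    deterministic_parts: list[str] = field(default_factory=list)
    authority_under_uncertainty: str = ""
    revalidation_triggers: list[str] = field(default_factory=list)

    def unanswered(self) -> list[str]:
        """Names of the questions that still have no answer."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Invented sample claim with two questions left open.
claim = FunctionClaim(
    bounded_function="classify obstacles from one forward camera",
    envelope_conditions=["daylight", "dry road"],
    enumerated_scenarios=["clear lane", "stationary obstacle", "crossing obstacle"],
    success_degradation_failure="defined per scenario in the test plan",
    deterministic_parts=["brake actuation logic"],
)

open_questions = claim.unanswered()
print(open_questions)
```

A record like this proves nothing about the quality of the answers. It only makes it harder for a question about authority or revalidation to go silently unasked.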

These questions are not implementation details; they form the grammar of engineering control. If engineers cannot answer them, the system may still attract commercial interest, but it has not yet earned full engineering legitimacy. Greater model performance will not solve that problem. Only sound architecture, clear boundedness, and disciplined verification can.

Boundaries Are What Make AI Engineerable

This article does not argue against Artificial Intelligence. It argues against boundaryless claims about Artificial Intelligence.

There is a serious place for AI inside engineered systems. However, that place depends on finite behavioral domains, explicit operating assumptions, scenario-based validation, defined authority, and ongoing revalidation over time. These are not obstacles to innovation. They are the conditions that make technical capability engineerable.

So the central question is not whether AI is powerful. It clearly is. The real question is whether the claimed function remains bounded strongly enough to be treated as an engineering object rather than as an open-ended experiment.

Without boundaries, there is no meaningful completeness. Without completeness, there is no defensible verification. And without verification, the system may still operate, but it has not yet earned the full language of engineering.

The integration of Artificial Intelligence into complex systems ultimately becomes an architectural question: whether the system remains bounded, verifiable, and governed by clear responsibility.

Conclusion

Artificial Intelligence does not become part of an engineered system simply because it produces useful results. It becomes engineerable only when engineers bound its function, define its operating assumptions, enumerate its relevant scenarios, and evaluate its behavior within a finite verification framework. Those conditions do not restrict engineering. They make engineering possible.

For that reason, engineers should not focus first on whether AI is powerful, adaptive, or commercially promising. They should ask whether the claimed function stays within a defined behavioral envelope that they can understand, validate, and govern. If it does, then they can integrate AI into a disciplined system architecture. If it does not, the system may still operate, but it no longer rests on a complete engineering foundation. It becomes, in effect, a probabilistic experiment deployed into reality.

Engineering has always required more than performance. It requires boundaries, traceability, and verification. The same standard must govern Artificial Intelligence. Without finite behavioral limits, engineers cannot establish meaningful completeness. Without completeness, they cannot defend validation. And without validation, the system has not earned the full language of engineering.

That is the dividing line: AI systems without boundaries are not engineering.

References

Article 1: Artificial Intelligence in Engineered Systems: Driver or Bounded Instrument?

NIST: the official framework page for trustworthy AI risk management, with links to the framework resources.

Copyright Notice

© 2026 George D. Allen.

All rights reserved. No portion of this publication may be reproduced, distributed, or transmitted in any form or by any means without prior written permission from the author.

For editorial use or citation requests, please contact the author directly.

About George D. Allen Consulting:

George D. Allen Consulting is a pioneering force in driving engineering excellence and innovation within the automotive industry. Led by George D. Allen, a seasoned engineering specialist with an illustrious background in occupant safety and systems development, the company is committed to revolutionizing engineering practices for businesses on the cusp of automotive technology. With a proven track record, tailored solutions, and an unwavering commitment to staying ahead of industry trends, George D. Allen Consulting partners with organizations to create a safer, smarter, and more innovative future. For more information, visit www.GeorgeDAllen.com.

Contact:

Website: www.GeorgeDAllen.com

Email: inquiry@GeorgeDAllen.com

Phone: 248-509-4188
