Artificial Intelligence and the Missing Architecture of Responsibility


From Boundaries to Responsibility

In the first article of this series, I argued that Artificial Intelligence enters engineered systems along two architectural paths: as the driver of system behavior or as a bounded engineering instrument. In the second article, I argued that engineering requires finite behavioral boundaries. The next question follows directly: who remains responsible when AI influences system behavior?

That question is not only legal, and it is not something to answer after failure. It is an architectural question. If AI participates in decisions that affect physical outcomes, then engineers must define where authority resides, who approves activation, who owns runtime judgment, and who acts when behavior changes or drifts.

Without that structure, the system may still operate, but it operates inside an accountability gap.

Responsibility Must Exist Before Deployment

Many organizations still treat responsibility as something they can reconstruct later. The system acts, an event occurs, and then someone investigates, assigns blame, or writes corrective language. Engineering cannot depend on that sequence.

Responsibility must exist before deployment in a form that can be traced through the architecture itself. If a system can influence the real world, then someone must already own the activation decision, the operating assumptions, the escalation logic, and the intervention path.

That is the core issue. Responsibility does not begin after failure. It begins before the system acts.

This is why strong performance is not enough. A model may appear capable and still sit inside an organization that has not clearly assigned authority. When that happens, technical sophistication can mask structural irresponsibility.

The First Missing Element: Activation Authority

The first missing element is activation authority.

Before an AI-enabled function becomes active in an operational system, someone must decide under what conditions it may act, recommend, intervene, or control. That decision cannot float between software, testing, product language, and vague references to “the system.”

Activation authority means naming the person, role, or governed mechanism that allows the function to operate in a real deployment context. It also means defining the evidence required before activation is justified.

A system should not activate simply because the model appears powerful. It should activate only after engineers and decision-makers review the function, the assumptions, the limits, the fallback logic, and the authority boundaries. If no one clearly owns that decision, then the organization has not fully governed the function it is releasing.
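One way to make activation authority checkable is to treat it as a gate. The function stays inactive unless a named owner exists and each required category of evidence has been reviewed. The Python sketch below is illustrative only. `ActivationRequest`, `REQUIRED_EVIDENCE`, and `may_activate` are hypothetical names, and the evidence categories are assumed to mirror the review items listed above (function, assumptions, limits, fallback logic, authority boundaries).

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional

# Hypothetical evidence categories, mirroring the review items named in the text.
REQUIRED_EVIDENCE = frozenset(
    {"function", "assumptions", "limits", "fallback_logic", "authority_boundaries"}
)


@dataclass
class ActivationRequest:
    """Illustrative record of a request to activate an AI-enabled function."""

    function_name: str
    deployment_context: str
    owner: Optional[str]  # person, role, or governed mechanism owning the decision
    reviewed_evidence: set[str] = field(default_factory=set)


def may_activate(request: ActivationRequest) -> tuple[bool, list[str]]:
    """Return (allowed, reasons).

    Activation is refused unless a named owner exists and every required
    evidence category has been reviewed. The reasons list explains a refusal.
    """
    reasons: list[str] = []
    if not request.owner:
        reasons.append("no named activation owner")
    missing = sorted(REQUIRED_EVIDENCE - request.reviewed_evidence)
    if missing:
        reasons.append("missing evidence: " + ", ".join(missing))
    return (not reasons, reasons)


if __name__ == "__main__":
    # Hypothetical request with no owner and incomplete evidence.
    request = ActivationRequest(
        function_name="example_assist_function",
        deployment_context="example operating context",
        owner=None,
        reviewed_evidence={"function", "limits"},
    )
    print(may_activate(request))
```

The gate returns its reasons as well as a yes/no answer, so a refused activation leaves a record of what was missing instead of a silent failure.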

The Second Missing Element: Runtime Decision Validation

The second missing element is runtime decision validation.

Many organizations say they keep a human in the loop. That phrase sounds reassuring, but it often hides the real issue. The important question is not whether a human is somewhere nearby. The important question is whether the runtime decision chain preserves meaningful review, explicit override authority, and clear ownership of the action taken.

A person who passively approves model output without understanding its basis does not exercise real authority. A reviewer who cannot see assumptions, confidence limits, conflicting inputs, or degraded states does not truly validate anything.

The architecture must define what the reviewer sees, what the reviewer owns, what evidence accompanies the recommendation, and what happens if the reviewer rejects or overrides it. Otherwise, human oversight becomes a ceremonial phrase instead of a real control structure.
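These requirements can be sketched as a rule that only an informed review counts, and that every outcome (accept, reject, or override) is attributed to the reviewer who made it. This is a minimal Python sketch under assumed names (`Recommendation`, `ReviewDecision`, `Verdict`, `resolve_action`). The `fallback` action and the `shown_evidence` flag are illustrative assumptions, not a prescribed interface.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Verdict(Enum):
    ACCEPT = "accept"
    REJECT = "reject"
    OVERRIDE = "override"


@dataclass(frozen=True)
class Recommendation:
    """Model output bundled with the evidence a reviewer must be able to see."""

    action: str
    assumptions: tuple[str, ...]
    confidence: float  # model-reported confidence, 0.0 to 1.0
    conflicting_inputs: tuple[str, ...]
    degraded_state: bool


@dataclass(frozen=True)
class ReviewDecision:
    reviewer: str
    verdict: Verdict
    shown_evidence: bool  # hypothetical flag: was the full evidence displayed?
    override_action: Optional[str] = None


def resolve_action(
    rec: Recommendation, review: ReviewDecision, fallback: str
) -> tuple[str, str]:
    """Return (action_taken, accountable_reviewer).

    A review given without the evidence is rejected outright, so passive
    approval cannot stand in for validation.
    """
    if not review.shown_evidence:
        raise ValueError("review invalid: evidence was not shown to the reviewer")
    if review.verdict is Verdict.ACCEPT:
        return rec.action, review.reviewer
    if review.verdict is Verdict.REJECT:
        return fallback, review.reviewer
    # OVERRIDE: the reviewer must state the replacement action explicitly.
    if not review.override_action:
        raise ValueError("override requires an explicit replacement action")
    return review.override_action, review.reviewer
```

Rejecting an uninformed review is a deliberate choice in this sketch: it turns "a human was nearby" into a condition the software can actually check.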

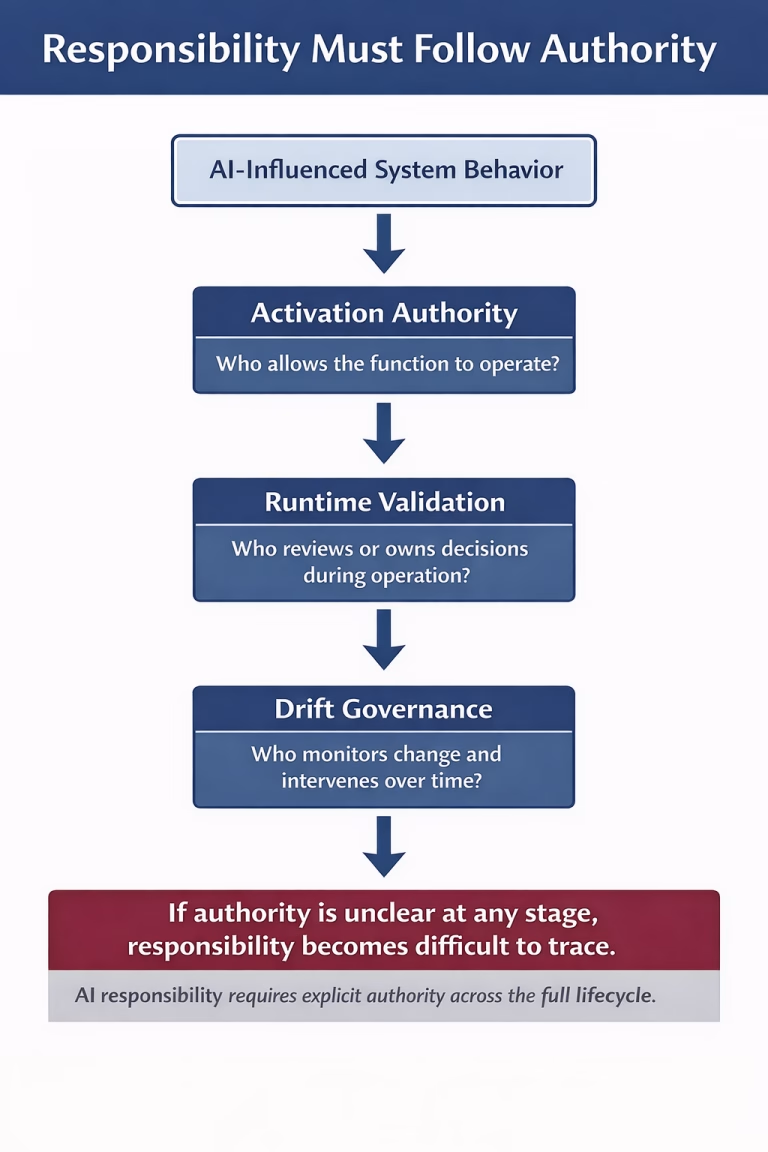

Figure 1. Responsibility in AI-enabled systems must remain traceable across activation, runtime decision validation, and drift governance.

The Third Missing Element: Drift Governance

The third missing element is drift governance.

Even a bounded AI-enabled system can drift over time. Data distributions change. Sensor conditions shift. Software updates alter interaction effects. Operating contexts expand beyond original assumptions. A function that looked trustworthy during validation may become less trustworthy later, even before anyone sees an obvious failure.

That is why responsibility cannot stop at launch. Engineers and organizations must define who monitors drift, what indicators trigger concern, what thresholds require revalidation, and who holds authority to reduce scope, suspend operation, or roll back the function.

If no one owns drift, then the organization has treated responsibility as a release milestone instead of a lifecycle obligation. In AI-enabled systems, that is not enough.
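Drift governance can likewise be expressed as explicit rules that tie each monitored indicator to a threshold, a response, and the role that holds authority to carry it out. The Python sketch below uses hypothetical indicator names and an assumed escalation order (revalidate, reduce scope, suspend, roll back). Real thresholds would come from the function's validation evidence, not from this example.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import IntEnum


class Response(IntEnum):
    """Escalating responses; the ordering is an assumption for illustration."""

    NONE = 0
    REVALIDATE = 1
    REDUCE_SCOPE = 2
    SUSPEND = 3
    ROLLBACK = 4


@dataclass(frozen=True)
class DriftRule:
    indicator: str  # hypothetical monitored indicator name
    threshold: float  # value at or above which the rule fires
    response: Response
    owner: str  # role holding authority to execute the response


def evaluate_drift(
    readings: dict[str, float], rules: list[DriftRule]
) -> tuple[Response, list[str]]:
    """Return the strongest triggered response and the owners who must act.

    A missing reading counts as triggered, so an unmonitored indicator
    cannot silently pass.
    """
    triggered = []
    for rule in rules:
        value = readings.get(rule.indicator)
        if value is None or value >= rule.threshold:
            triggered.append(rule)
    if not triggered:
        return Response.NONE, []
    strongest = max(rule.response for rule in triggered)
    owners = sorted({rule.owner for rule in triggered if rule.response == strongest})
    return strongest, owners
```

Returning the owner together with the response keeps the question "who acts?" attached to every drift signal rather than leaving it to a meeting after the fact.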

Why This Matters in SDV and Autonomous Systems

This issue becomes especially important in software-defined vehicles and other autonomous or semi-autonomous systems.

In traditional systems, authority often aligned more visibly with hardware functions, fixed logic, and release-specific validation. In software-defined architectures, behavior increasingly depends on distributed logic, updates, interfaces, and interaction across modules. That flexibility can improve capability, but it can also dilute ownership.

The problem becomes even sharper in autonomous systems. The more a system shifts from assistance to behavioral determination, the more dangerous vague responsibility becomes. Engineers cannot accept the phrase “the system decided” as a substitute for accountable design.

Systems do not own moral or engineering responsibility. People and organizations do. Therefore, advanced automation requires stronger chains of authority, not weaker ones.

Corporate Accountability Is Part of the Architecture

Responsibility does not end with the engineering team. It extends into the organization.

Companies often describe AI in terms of innovation, transformation, or product capability. Those words do not define accountability. Real accountability requires the company to map authority across the lifecycle: who set the claim, who approved the scope, who accepted the evidence, who authorized deployment, who monitored field behavior, and who retained the power to intervene.

Without that map, the organization may still have reviews, safety statements, and escalation meetings, yet it lacks an actual architecture of responsibility.

That is why this topic belongs inside systems engineering. Corporate accountability is not external to the design. It is part of the design whenever the product can influence real-world behavior.
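The lifecycle map can be checked mechanically: every stage needs a named owner, and placeholder answers such as "the system" do not count. This Python sketch is a hypothetical illustration. The stage identifiers paraphrase the lifecycle questions in the text, and the `NON_OWNERS` set and example role names are assumptions.

```python
from __future__ import annotations

# Stage identifiers paraphrase the lifecycle questions in the text (illustrative).
LIFECYCLE_STAGES = (
    "set_claim",
    "approve_scope",
    "accept_evidence",
    "authorize_deployment",
    "monitor_field_behavior",
    "retain_intervention_power",
)

# Assumed placeholder answers that do not identify an accountable owner.
NON_OWNERS = {"", "the system", "tbd", "n/a"}


def accountability_gaps(authority_map: dict[str, str]) -> list[str]:
    """Return lifecycle stages whose owner is missing or a placeholder."""
    gaps = []
    for stage in LIFECYCLE_STAGES:
        owner = (authority_map.get(stage) or "").strip().lower()
        if owner in NON_OWNERS:
            gaps.append(stage)
    return gaps


if __name__ == "__main__":
    # Hypothetical role names, for illustration only.
    example = {
        "set_claim": "Product Safety Lead",
        "approve_scope": "Chief Engineer",
        "accept_evidence": "Validation Manager",
        "authorize_deployment": "The System",
        "monitor_field_behavior": "Field Quality Team",
    }
    print(accountability_gaps(example))
```

In this example the check reports `authorize_deployment` (owned by "the system") and `retain_intervention_power` (no entry at all) as gaps.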

Responsibility Must Follow Authority

The practical questions are straightforward.

Who owns activation authority? Who validates runtime decisions? What happens when system behavior drifts? Who can narrow scope, suspend operation, or demand revalidation? What traceability links sensed conditions, model output, human review, and physical consequence?

These are not management abstractions. They form the operational grammar of responsible engineering.
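The last of those questions, traceability, can be sketched as a record that carries each link in the chain, with any empty link flagged as a break. The field names below are hypothetical, chosen to match the links named in the text (sensed conditions, model output, human review, physical consequence), plus an assumed field for the authority that owned the action.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass(frozen=True)
class TraceRecord:
    """One event traced from sensed conditions to physical consequence."""

    event_id: str
    sensed_conditions: str
    model_output: str
    human_review: str  # reviewer and verdict, or a stated reason for no review
    physical_consequence: str
    decision_authority: str  # assumed field: who owned the action taken


def broken_links(record: TraceRecord) -> list[str]:
    """Return the names of empty fields, i.e. where the trace is broken."""
    return [f.name for f in fields(record) if not getattr(record, f.name).strip()]


if __name__ == "__main__":
    # Hypothetical event with no recorded human review.
    event = TraceRecord(
        event_id="evt-001",
        sensed_conditions="wet road, reduced visibility",
        model_output="recommend reduced speed",
        human_review="",
        physical_consequence="vehicle slowed",
        decision_authority="function owner",
    )
    print(broken_links(event))
```

Requiring an explicit reason when no review occurred means that "no review" becomes a recorded decision rather than an unexplained gap.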

Artificial Intelligence does not eliminate human responsibility. It increases the need to define it clearly. If engineers and organizations cannot trace responsibility back to explicit decision authority, then the system may still look advanced, but it has not yet been fully engineered.

That is the core claim of Article 3: responsibility must remain traceable to decision authority. Otherwise, AI integration creates responsibility without architecture.

Conclusion

Artificial Intelligence does not remove responsibility from engineered systems. Instead, it makes responsibility architecture more necessary. Once AI influences system behavior, engineers and organizations must define who activates it, who validates decisions during operation, and who governs drift over time. Otherwise, responsibility becomes difficult to trace, and the system may operate without a fully engineered chain of accountability.

In that sense, the central issue is not AI capability alone, but the authority structure that surrounds it. If decision authority remains explicit, bounded, and reviewable, then responsibility can still be assigned and defended. However, if authority becomes vague or distributed without clear ownership, accountability weakens. Therefore, responsibility must remain traceable to decision authority if AI integration is to remain fully engineered.

References

Article 2: Artificial Intelligence Without Boundaries Is Not Engineering

NIST AI Risk Management Framework: official framework page for trustworthy AI risk management, with links to the framework resources.

Copyright Notice

© 2026 George D. Allen.

All rights reserved. No portion of this publication may be reproduced, distributed, or transmitted in any form or by any means without prior written permission from the author.

For editorial use or citation requests, please contact the author directly.

About George D. Allen Consulting:

George D. Allen Consulting is a pioneering force in driving engineering excellence and innovation within the automotive industry. Led by George D. Allen, a seasoned engineering specialist with an illustrious background in occupant safety and systems development, the company is committed to revolutionizing engineering practices for businesses on the cusp of automotive technology. With a proven track record, tailored solutions, and an unwavering commitment to staying ahead of industry trends, George D. Allen Consulting partners with organizations to create a safer, smarter, and more innovative future. For more information, visit www.GeorgeDAllen.com.

Contact:

Website: www.GeorgeDAllen.com

Email: inquiry@GeorgeDAllen.com

Phone: 248-509-4188

Unlock your engineering potential today. Connect with us for a consultation.